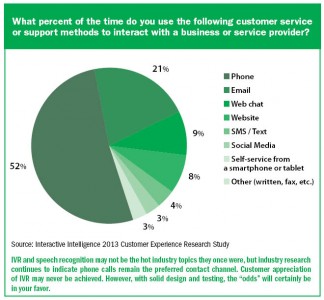

IVR and Speech Recognition

As I navigated my way through the seemingly endless maze of machines and retirees in Hawaiian shirts at the local casino in Tampa, Fla., I was on a mission. While the polyphonic chaos of coins dropping into a winner’s tray, bells and chimes sounding, and the repetitive chanting of “Wheel of Fortune” made it nearly impossible to hear, it was music to my ears. Although I’m no high-roller, it was exactly what I had hoped for. I had gambled that my operation would benefit significantly from the use of interactive voice response (IVR) self-service and speech recognition.

Perhaps a little background is appropriate. In late 2005, I took responsibility for a multisite contact center operation in the financial services industry. The focus of the organization that I led was point-of-sale payment authorization, primarily paper. Industry trends indicated that these transactions were gradually eroding in favor of alternative forms of payment. However, two areas within this space—payroll check cashing and gaming cash access—were growing rapidly. A significant portion of our calls were relatively simple and of short duration, and the callers were typically power users. In an effort to reduce expenses to maintain or even perhaps grow margin despite the erosion of our core business, I built a business case and proceeded to internally sell my executive team on moving forward. I pitched three initial applications with very conservative expectations of success. The case was extremely compelling and I was able to move forward with the project.

As I am sure you have all experienced, the process of vendor selection, contract negotiation and approval, application requirements gathering, development and testing always takes longer than anticipated. By summer 2007, we were ready to go live. We started with our core point-of-sale business. The result was, as I had hoped, IVR self-service completion that was significantly better than expected. I am not going to pretend that there was not pushback. With the introduction of IVR self-service, there will always be those who work diligently to avoid it no matter how well it works. However, my project team had worked hard to follow best practices, build our IVR persona, make it simple to use and put our solution through its paces prior to going live. Given the value we were experiencing, the pushback we received was only a murmur and certainly something we were prepared to address. Life was good!

Next on the project plan was the implementation of the same successful application in front of our two growing channels. And next on our communication plan, we moved to share the metrics detailing our success and firm up the “go-live” date with our business partners in the Cash Access division.

Wait, what?

“We are not willing to move forward with speech recognition self-service.”

“Did you not see the compelling data I sent along with my email? The results are incredible.”

“Speech recognition will not work in a casino. There is too much background noise.”

In fairness to those who led our Cash Access business, I did understand their concern. Based on my experience, cash access is perhaps unparalleled in importance to casino leadership. Any process, technology or vendor, for that matter, that potentially interrupts their guests’ ability to play is viewed with extreme seriousness. Nonetheless, I was bound and determined to realize the full potential of this solution. I needed to learn firsthand whether the buzz of a bustling casino degraded the recognition of my application.

The following are some of the myths, mistakes and tips that I gathered during my experience. I hope that these insights will help to build confidence in a technology tool that I feel has forever gotten a bad rap.

MYTH: Speech recognition does not recognize what I say and does not work with background noise

Despite living in Tampa for eight years, I had never visited the casino. So I walked nearly every inch of the gaming floor, completing one successful test after another. The background noise was so loud in some areas that I could barely hear my persona’s voice prompt, a clue to what drives the first myth.

Recognition in a speech application is supported by a grammar file for each collection. While there are a number of other settings related to timing and confidence level, a grammar file is what the speech engine compares the caller’s utterance to in order to determine a match. I was very familiar with my application and I knew what my application wanted me to say. More often than not, when recognition fails, it is not that the speech engine didn’t recognize what the caller said, but rather that the caller said something the grammar file did not contain, leaving the engine nothing to match it to.
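To make the role of the grammar concrete, here is a minimal sketch in Python. It is not how any particular speech engine works: the grammar contents, the `recognize` function, the `Result` type and the 0.5 confidence threshold are all assumptions made for illustration. It only shows the distinction drawn above between an utterance the engine heard poorly and an utterance the grammar never contained.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical grammar for a yes/no confirmation prompt: each accepted
# phrase maps to the value handed back to the application.
YES_NO_GRAMMAR = {
    "yes": "YES",
    "yeah": "YES",
    "correct": "YES",
    "no": "NO",
    "nope": "NO",
}


@dataclass
class Result:
    status: str            # "match", "low_confidence" or "no_match"
    value: Optional[str]   # set only when status == "match"


def recognize(utterance, confidence, grammar, threshold=0.5):
    """Compare a transcribed utterance against a grammar (illustrative only)."""
    # Normalize case and whitespace before the lookup.
    phrase = " ".join(utterance.lower().split())
    if phrase not in grammar:
        # The caller said something the grammar does not contain.
        return Result("no_match", None)
    if confidence < threshold:
        # The phrase is in the grammar, but the engine was not sure it heard it.
        return Result("low_confidence", None)
    return Result("match", grammar[phrase])


in_grammar = recognize("Yes", 0.9, YES_NO_GRAMMAR)
out_of_grammar = recognize("yep, that's right", 0.9, YES_NO_GRAMMAR)
noisy = recognize("no", 0.2, YES_NO_GRAMMAR)
```

In this sketch the second call fails even at high confidence, because "yep, that's right" is simply not in the grammar. That is the kind of failure a caller tends to report as "it doesn't recognize what I say."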

MISTAKE: Failing to routinely tune a speech application

Prior to speech applications, touch-tone or DTMF IVR applications were largely designed, developed, and implemented just once. Once trained, an electronic agent needs little to no follow-up training. Not so in a speech environment. Speech applications require periodic tuning and analysis. Initial tunings will yield numerous opportunities to improve performance, and subsequent tunings will continue to produce opportunities. I believe two to three tunings in the first year of a new speech implementation is appropriate. For more mature applications, one to two per year will suffice.
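One piece of a tuning pass can be sketched as a simple tally of what callers said that the grammar did not cover. The example below is an assumption-laden illustration in Python: the log format (prompt name, transcribed utterance, matched flag), the prompt names and the sample entries are all hypothetical, and a real tuning exercise would work from the speech platform's own logs and recordings.

```python
from collections import Counter


def out_of_grammar_report(log, top_n=3):
    """Return the most frequent unmatched utterances for each prompt.

    `log` is an iterable of (prompt_name, utterance, matched) tuples,
    a hypothetical format chosen for this sketch.
    """
    misses = {}
    for prompt, utterance, matched in log:
        if not matched:
            key = utterance.lower().strip()
            misses.setdefault(prompt, Counter())[key] += 1
    return {prompt: counts.most_common(top_n) for prompt, counts in misses.items()}


# Made-up sample entries, not data from any real application.
sample_log = [
    ("confirm_amount", "yes", True),
    ("confirm_amount", "yep", False),
    ("confirm_amount", "Yep", False),
    ("confirm_amount", "that's right", False),
    ("check_number", "one two three four", True),
]

report = out_of_grammar_report(sample_log)
```

Phrases that show up repeatedly in a report like this are candidates for adding to the grammar or for rewording the prompt so callers are steered toward what the application expects.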

Armed with the success of my field test, I gained the confidence required to successfully negotiate the implementation. We successfully reduced our cost of service and experienced very few customer complaints post-implementation. My gamble paid off!

More recently, as a contact center consultant, I have had the good fortune to visit many customers and learn of their successes as well as their challenges with IVR, both touch-tone and speech recognition. I noticed a number of common themes related to IVR design and deployment. With each engagement, I follow a fairly consistent process. First, I spend time with those individuals responsible for the client’s caller experience, typically an IT voice services resource, a business analyst or a leader in the contact center, and in some cases, a combination or all of the above. I ask that they walk me through existing call flow. We generally discuss what they hope to accomplish, how they evaluate success, how often they modify their IVR, and perceived challenges. I also explore what went into design, how they tested their solution, and if they continue to test and evaluate their IVR performance. Next, I sit side by side with their agents, observing their actions and listening.

MISTAKE: Failing to utilize your internal experts

One theme that presents itself frequently is that the IVR caller experience is designed very differently from the way an agent handles the same inquiry. The data collected, the order in which it is collected, and the manner in which it is presented back to the caller are very often dramatically different. I will leave you to ponder which approach is more effective and efficient.

TIP: Engage your agents in design, testing and on-going evaluation

Generally speaking, I believe those who develop requirements for new IVR applications without including agents perhaps fear the repercussions of agent involvement. They may believe that the agents will fear replacement, with a resultant dip in morale or perhaps a growth in attrition. Why else would you not invite an expert to the table? Your best agents know what to ask for, how to ask for it, and how to best navigate the desktop. They do it constantly. Your quality program should bear that out. I believe, to the contrary, that involved and informed agents will adapt to change better than agents left in the dark. In the absence of information, it is human nature to let imagination roam free. The sooner your communication plan includes your agents the better. Additionally, if one of your goals with IVR is to reduce or repurpose contact center staff, those you include in the design are not likely to be the resources that will be negatively impacted.

A second theme that emerges relates to testing of IVR applications. In nearly all cases, the typical IT project testing practices are followed, culminating in user acceptance testing (UAT). Very often I find that the UAT test resources are the same set of resources that designed the application, or are internal resources who, much like me in the casino, are very familiar with the business and perhaps the application.

TIP: Engage your customers in testing

I am a big advocate of “usability” testing. Once you feel your application is tested and ready for production, engage your customers in testing and providing feedback. Provide them with scenarios and test data. Solicit their feedback in areas such as simplicity, pace, clarity of self-direction and recognition (they may be responding with something you didn’t anticipate). If customers are not an option, collaborate with your local government’s workforce assistance agency to provide some eager temporary labor and garner some goodwill for your company. The feedback you receive from usability testing is extremely valuable, and it beats learning of caller experience challenges once your application is in production.

The goal of IVR generally comes down to two fundamental objectives:

1. Quick and efficient identification of the caller, and his or her reason for reaching out to you; and delivery of the call to the resource best prepared to handle the interaction.

2. Self-service designed to allow the caller to complete their interaction without reaching an agent.

If executed well, both objectives can deliver an improved caller experience while delivering savings to your bottom line.

MISTAKE: Treating a voicemail option as self-service

I see this often when evaluating IVR applications. Callers are given the option while interacting with the IVR to record a message for an individual, or more commonly, to update information typically challenging for the IVR to collect. Generally speaking, I see this practice in contact centers that have difficulty meeting their service level targets. I understand the driver behind delivering this capability as it may be considered desirable from a caller’s perspective as opposed to waiting in queue for an agent to come available. My objection to this practice is the operations processes that follow.

I have seen variations on what follows, but typically a small team of agents is responsible for listening to the voicemails and completing the requested changes. Simple enough, right? Besides the fact that, in my opinion, this practice introduces a new point of failure, allow me to break down my concern with this approach. Primarily, when I inquire how this workload is tracked, I almost universally get the same response: something akin to a “deer in the headlights” look, followed by statements such as “we don’t” or “we keep counts.” Examining the process further, the agents who monitor the voicemail boxes are usually senior or lead agents who are no longer answering “live” customer interactions, a topic unto itself that I will reserve for a future issue. Additionally, these agents are generally instructed to complete this activity while logged out or in an off-phone state. In summary, workload that is highly measurable and critical for inclusion in any contact center workload-sizing process is now a black hole consuming perhaps the most efficient agent population. In many cases, when I inquire how center leadership knows that this work has been completed and how they evaluate it for quality, I get that same look again.

MYTH: The industry standard for IVR success

I am asked about an industry standard to measure IVR success just about every time I meet with a customer to review their IVR performance. In the past, I am sure I asked the aforementioned question myself. My answer is, “It depends.” As an operations manager, I cringe a little each time I say it. However, my experience supports that answer.

Using the project mentioned earlier in this piece, I was implementing three applications serving very different business needs:

1. An authorization application that was simple, of short duration and highly self-service capable, dialed by power users very familiar with the interaction. I had very high expectations for “completion” within this application.

2. A consumer disclosure application that was legally required, purposely vague, delivered to frustrated or angered unfamiliar callers, and provided little opportunity to self-serve. I had very low expectation for this application, but any “deflection” was very beneficial.

3. A pay-by-tel application that greeted callers responding to collections dunning activity. I had no idea what to expect with this application. Would callers prefer to interact with the IVR, paying their debt quickly and quietly? Or might they prefer to speak to a “live” agent, stating their case or making payment arrangements? As you might expect, it was a mixed bag.

In summary, just as the expectations for each of these applications varied, the success of your IVR is based on your unique situation. Influencers such as caller demographics, call types, available alternatives, time of day and day of week are just a few of the many factors that will drive your application success.
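The "it depends" answer can be illustrated with a short Python sketch that measures each application against itself rather than against a single industry figure. Everything here is assumed for illustration: the `self_service_rate` helper, the definition of the rate (calls completed in the IVR divided by calls offered to it) and the call volumes, which are invented and are not results from the project described above.

```python
def self_service_rate(calls_offered, calls_completed_in_ivr):
    """Share of calls offered to the IVR that finished without an agent."""
    if calls_offered <= 0:
        raise ValueError("calls_offered must be positive")
    return calls_completed_in_ivr / calls_offered


# Hypothetical monthly volumes: (calls offered, calls completed in the IVR).
applications = {
    "authorization": (10_000, 7_000),
    "consumer_disclosure": (2_000, 200),
    "pay_by_tel": (1_500, 600),
}

rates = {
    name: self_service_rate(offered, completed)
    for name, (offered, completed) in applications.items()
}

for name, rate in rates.items():
    print(f"{name}: {rate:.0%}")
```

With numbers like these, a rate that would be disappointing for the authorization application could still be a welcome result for the disclosure application, which is why a single benchmark says little about any one of them.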

Chip Funk is a Contact Center Solutions Consultant at Interactive Intelligence.